
Securing Your Secrets in AWS using Hashicorp Vault

Hashicorp Vault

In an environment where infrastructure is immutable, instances are replaced rather than modified when you patch or scale, so there may be a need to pass secrets around, whether that be passwords for database connections or TLS certificates.

For example, if you need to register your instances with an internal DNS that uses Active Directory, you may need to provide credentials for an authenticated user at boot time, or you may need to place your TLS chain and private key in your Nginx configuration. As we want the instances to do this at launch without exposing our secrets, we need a way to keep them secure whilst allowing our instances to read them at build time.

This is where Vault comes in. Vault is an open-source tool created by Hashicorp for securely storing secrets while controlling access to passwords and certificates. This provides security in a dynamic infrastructure where applications and servers are ephemeral.

Whilst there are many native tools for storing secrets, Vault provides a centralised security solution: it’s a secrets store, key management system, encryption system, and PKI system. Having all of these in one box makes it easier to secure and to apply access control to a single solution. As Vault can run anywhere, your environment can evolve over time while you keep control of that data and, most importantly, can replicate it to other regions, other data centres, and other Cloud providers.

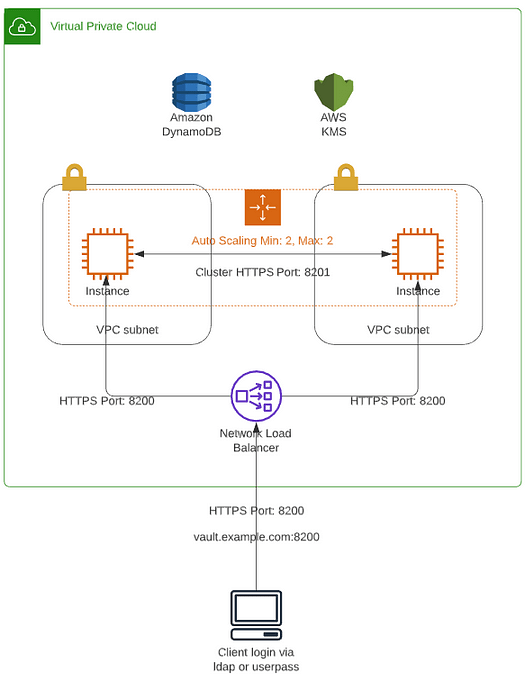

Above is an architectural overview of a possible Vault high-availability deployment within AWS. The two Vault servers are launched via an Auto Scaling Group that sits behind an internal load balancer. The storage backend for Vault data is a DynamoDB table that is encrypted using a KMS CMK.
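As a rough illustration of the deployment above, each Vault server's configuration file might look something like the sketch below. The region, table name, hostnames, and file paths are placeholder assumptions, not values from this deployment. Encryption of the DynamoDB table with the KMS CMK is configured on the table itself in AWS rather than in this file.

```hcl
# Sketch of a Vault server configuration; all values are placeholders.

# DynamoDB storage backend with high availability enabled.
storage "dynamodb" {
  ha_enabled = "true"
  region     = "eu-west-1"   # assumed region
  table      = "vault-data"  # hypothetical table name
}

# TLS listener that the internal load balancer forwards to.
listener "tcp" {
  address       = "0.0.0.0:8200"
  tls_cert_file = "/etc/vault/tls/vault.crt"  # hypothetical path
  tls_key_file  = "/etc/vault/tls/vault.key"  # hypothetical path
}

# Address advertised to clients (the load balancer), and the
# per-node address used for server-to-server traffic.
api_addr     = "https://vault.internal.example.com:8200"
cluster_addr = "https://10.0.1.10:8201"
```

With HA enabled, one server holds the active lock in DynamoDB while the other stands by, so either instance can be replaced by the Auto Scaling Group without losing data.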

When Vault is first initialized, it generates a master key which is split into multiple shares. The number of shares that the key is split into is defined at initialization, along with the number of shares required to recover the key. For example, you may split it into 8 shares, but only require 5 shares to recover the key.

Every time a Vault node is started (or a new node is added), it needs to be unsealed. This is because it does not yet have the master key in memory, meaning it cannot decrypt its own data (a state known as sealed). To unseal Vault, you can use a manual process in which operators provide the required number of shares to regenerate the master key. Having a manual process each time a node is restarted is not ideal in a dynamic environment. Luckily, we can use auto-unseal, which delegates the unseal process to AWS using KMS. KMS encrypts and decrypts the whole master key, removing the need for manual input of the shares.
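Auto-unseal with KMS is enabled through a seal stanza in the Vault server configuration. The following is a minimal sketch; the region and key ID are placeholders that you would replace with your own.

```hcl
# Sketch of the auto-unseal stanza; values are placeholders.
seal "awskms" {
  region     = "eu-west-1"                             # assumed region
  kms_key_id = "00000000-0000-0000-0000-000000000000"  # placeholder KMS key ID
}
```

The IAM role attached to the Vault servers needs permission to use that key (kms:Encrypt, kms:Decrypt and kms:DescribeKey), otherwise the node will stay sealed at startup.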

It should be noted that in the event of the auto-unseal failing, or if you need to generate a new root token for root access to Vault, you will need to revert to using the recovery shares via the manual method. As such, a process should be in place at Vault installation to encrypt the recovery shares and distribute them to nominated operators.

One such option is to use PGP encryption, using each operator’s public PGP key to encrypt ‘their’ share, which only they can decrypt in the event of manual intervention.

Authenticating AWS Instances with Vault

To enable instances to log into Vault and obtain the required secrets at build time, they need to obtain a token that will allow them access to Vault. Vault’s AWS authentication method provides an automated mechanism to retrieve a Vault token, removing the need for operators to provision security-sensitive credentials.

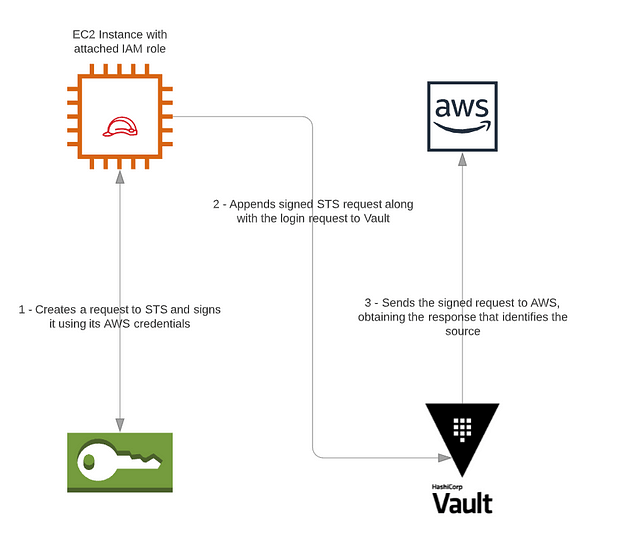

The AWS method has an authentication type of IAM. With this method, a special AWS request signed with AWS IAM credentials (a signed sts:GetCallerIdentity request) is used for authentication. The IAM credentials are automatically supplied to AWS instances through IAM instance profiles, and it is this information, already provided by AWS, that Vault can use to authenticate clients.

The Solution

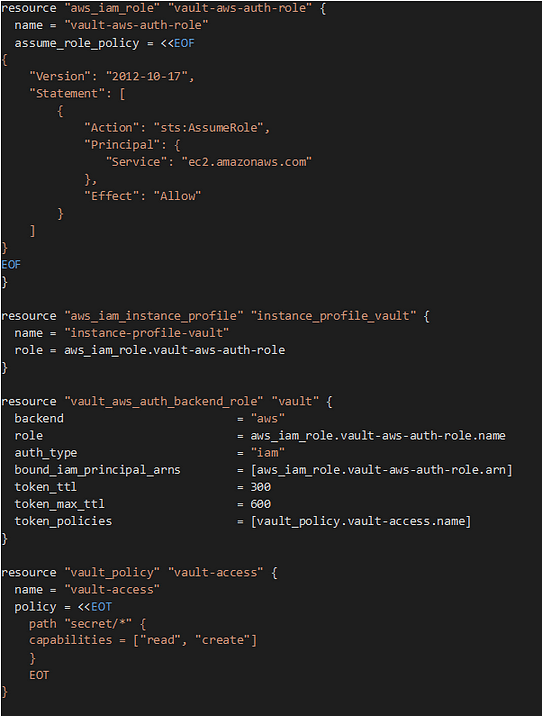

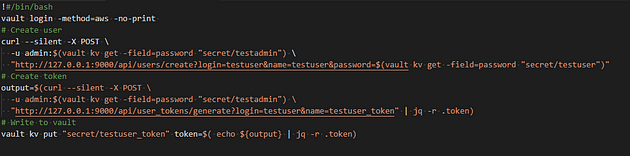

By using Hashicorp Vault along with its AWS auth method, we can have instances authenticate with Vault at launch and get the passwords and certificates required. To create the Vault AWS auth backend roles and policies required by our instances, we can either use the Vault CLI or, if your infrastructure is built using Hashicorp Terraform, use the resources available to create them when building your infrastructure. Below is some example Terraform code that creates an AWS IAM role, instance profile and a Vault backend role and policy.
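A minimal sketch of such a configuration follows, using the AWS and Vault Terraform providers. The resource names, the role name "app", the token lifetimes, and the assumption of a KV version 1 secrets engine mounted at secret/ are all illustrative choices rather than fixed requirements.

```hcl
# Sketch only: names, TTLs and paths are hypothetical placeholders.

# Trust policy allowing EC2 instances to assume the role.
data "aws_iam_policy_document" "assume_ec2" {
  statement {
    actions = ["sts:AssumeRole"]

    principals {
      type        = "Service"
      identifiers = ["ec2.amazonaws.com"]
    }
  }
}

# IAM role and instance profile attached to the instances.
resource "aws_iam_role" "app" {
  name               = "app-instance-role"
  assume_role_policy = data.aws_iam_policy_document.assume_ec2.json
}

resource "aws_iam_instance_profile" "app" {
  name = "app-instance-profile"
  role = aws_iam_role.app.name
}

# Enable the AWS auth method in Vault.
resource "vault_auth_backend" "aws" {
  type = "aws"
}

# Vault policy granting read and create under secret/
# (assumes KV version 1; KV version 2 paths include "data/").
resource "vault_policy" "app" {
  name   = "app"
  policy = <<-EOT
    path "secret/*" {
      capabilities = ["create", "read"]
    }
  EOT
}

# Vault role binding the IAM role to the policy via IAM auth.
resource "vault_aws_auth_backend_role" "app" {
  backend                  = vault_auth_backend.aws.path
  role                     = "app"
  auth_type                = "iam"
  bound_iam_principal_arns = [aws_iam_role.app.arn]
  token_policies           = [vault_policy.app.name]
  token_ttl                = 300
  token_max_ttl            = 900
}
```

Keeping the token TTLs short suits this use case, since the instance only needs the token long enough to fetch its secrets at launch.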

If this role were attached to an instance, it would be able to log into Vault and read and create secrets under the secret/ path. Calls to Vault can then be made in the user data of that instance without exposing any of our secrets.

What’s Next?

As we all know, passwords and certificates need to be rotated regularly to increase security. If these are updated while our services are running, we don’t want to be going from server to server updating certificates and passwords. When using Vault, you can update these using Vault Agent and templates, which allow Vault secrets to be rendered to local files. Further information on this can be found here.
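To give a feel for this, a Vault Agent configuration that logs in with the AWS IAM method and renders a certificate to disk might look roughly like the sketch below. The Vault address, the role name "app", the secret path, the field name "certificate", and the file paths are all assumptions for illustration, and the template assumes a KV version 1 secrets engine.

```hcl
# Sketch of a Vault Agent configuration; all values are placeholders.

vault {
  address = "https://vault.internal.example.com:8200"
}

# Log in automatically using the instance's IAM credentials.
auto_auth {
  method "aws" {
    config = {
      type = "iam"
      role = "app"  # hypothetical Vault role name
    }
  }

  # Write the resulting token to a local file.
  sink "file" {
    config = {
      path = "/run/vault/agent-token"  # hypothetical path
    }
  }
}

# Render a secret field to a local file and keep it up to date.
template {
  destination = "/etc/nginx/tls/server.crt"  # hypothetical path
  contents    = "{{ with secret \"secret/app/tls\" }}{{ .Data.certificate }}{{ end }}"
}
```

The agent re-renders the file when the secret changes, and it can also be configured to run a command after rendering, for example to reload the service that uses the certificate.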

About the Author

Gareth Norman is a DevOps Engineer at Version 1, working with clients to help develop and build innovative Cloud solutions. Stay tuned to Version 1 on Medium for more posts from Gareth.

About Version 1

Version 1 is a leader in Enterprise Cloud services and was one of the first AWS Consulting Partners in Europe. We are an AWS Premier Partner and specialise in migrating and running complex enterprise workloads in Public Cloud. We have a policy of continuous investment in technology solutions that benefit our customers and are among the small number of Amazon Web Services Partners to have achieved advanced partner status. Our team works closely with AWS to help our customers navigate the rapidly changing world of IT.