
AI Metrics: The Science and Art of Measuring Artificial Intelligence

- What can businesses gain by using AI metrics and evaluation?

- What are the types of AI evaluation metrics?

- Classification metrics

- Regression metrics

- Ethics metrics

- AI metrics evaluation process

- Conclusion

What can businesses gain by using AI metrics and evaluation?

Strategic Alignment with AI and smart KPIs

Using AI, organisations can create forward-looking smart KPIs. Business leaders acknowledge that they need new measurement capabilities and improved metrics to better anticipate and navigate strategic opportunities and threats. AI can help prioritise, organise and share KPIs, and improve their accuracy, detail and predictive capability. Individually and collectively, these improvements generate greater situational awareness and can improve how corporate functions work together to achieve strategic outcomes.

For businesses handling large codebases and legacy systems, tools like Decipher provide AI-powered documentation and analysis, significantly accelerating modernisation efforts while ensuring strategic alignment.

NIST’s Approach to AI Metrics and Evaluation – A Framework for Businesses

The National Institute of Standards and Technology (NIST) is a United States federal agency that conducts research and development of metrics, measurements, and evaluation methods in emerging and existing areas of AI. NIST’s efforts include advancing the measurement science for AI by defining, characterising, theoretically and empirically developing, and analysing quantitative and qualitative metrics and measurement methods for various characteristics of AI technologies.

NIST also provides a framework, the AI Risk Management Framework (AI RMF), to help businesses manage the risks associated with AI systems. Its programmes aim to advance the state of the art in AI technologies and nurture trust in their design, development, use, and governance.


What are the types of AI evaluation metrics?

The performance of AI-based systems is assessed using evaluation metrics, which determine whether a model is performing within the required parameters and standards. Let us examine three types of evaluation metrics: classification, regression and ethics.

Classification Metrics

A classification metric is a number that evaluates how well an AI model performs a classification task, such as binary or multiclass classification. It indicates how often, and in what ways, the model’s predicted classes agree with the true labels. The most common classification metrics include accuracy, precision, recall, F1 score and AUC-ROC.

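Accuracy measures the proportion of correct predictions (true positives plus true negatives) among the total number of cases examined. It is widely used for classification problems, but it can be misleading when one class is much more common than the other, because a model that always predicts the majority class can still score highly.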

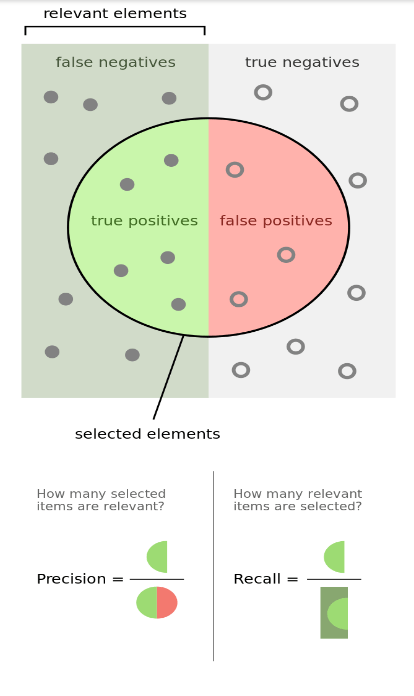

Precision is the ratio of true positive results among the total number of positive results (true positives and false positives) predicted by the model. It is a measure of the accuracy of positive predictions.

Recall, also called sensitivity or the true positive rate, measures the ratio of true positives to the sum of true positives and false negatives (relevant items that the model wrongly predicted as negative). It is a measure of the model’s ability to identify all relevant instances.

Figure 1. Positives and negatives

F1 Score is the harmonic mean of precision and recall, providing a balance between them. It is particularly useful when both false positives and false negatives matter and the classes are imbalanced, since a model cannot achieve a high F1 score by excelling at only one of the two.
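The definitions above can be sketched in a few lines of Python. This is a minimal from-scratch illustration that assumes binary labels, with 1 marking the positive class. The function names and sample data are hypothetical, and in practice a library such as scikit-learn would normally be used instead.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, FP, FN and TN for binary labels, treating 1 as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    return tp, fp, fn, tn


def classification_metrics(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    # Guard against division by zero when there are no positive predictions/labels
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical labels and predictions
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 0, 0, 1, 0, 0, 1]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Here the model finds only two of the four positives, so recall (0.5) is noticeably lower than accuracy (0.625).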

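Area Under the ROC (Receiver Operating Characteristic) Curve (AUC-ROC) measures the entire two-dimensional area underneath the ROC curve, which plots the true positive rate against the false positive rate as the classification threshold varies. An AUC of 1.0 indicates that every positive is ranked above every negative, while 0.5 is no better than random guessing.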
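AUC-ROC also has an equivalent probabilistic reading. It is the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The sketch below computes it that way by comparing every positive-negative pair and counting ties as half. It is an illustrative O(n²) version with hypothetical scores. Library implementations use faster rank-based or threshold-sweeping approaches.

```python
def roc_auc(y_true, scores):
    """AUC-ROC as P(score of random positive > score of random negative)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC-ROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ordering
    return wins / (len(pos) * len(neg))


# Hypothetical model scores (higher = more likely positive)
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc(y_true, scores)
print(auc)
```

Three of the four positive-negative pairs are ordered correctly, so the AUC is 0.75.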

Regression Metrics

Regression metrics evaluate the performance of AI regression models, which predict a continuous numeric value. Common regression metrics include Mean Absolute Error (MAE) and Mean Squared Error (MSE), among many others. A list of 101 metrics can be found here.

Some metrics include:

Mean Absolute Error (MAE) is the average of the absolute differences between predicted and actual values.

Mean Squared Error (MSE) is the average of the squared differences between predicted and actual values.

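Root Mean Squared Error (RMSE) is the square root of MSE. Like MSE, it gives a relatively high weight to large errors. Unlike MSE, it is expressed in the same units as the target variable, which makes it easier to interpret.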
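The three error metrics can be computed directly from their definitions. The sketch below uses only the standard library and hypothetical values. The single large error (3.0) pushes RMSE well above MAE, which is the extra weighting on large errors described above.

```python
import math


def regression_metrics(y_true, y_pred):
    """MAE, MSE and RMSE computed directly from their definitions."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and the same length")
    errors = [p - t for t, p in zip(y_true, y_pred)]
    n = len(errors)
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    rmse = math.sqrt(mse)  # back in the same units as the target
    return {"mae": mae, "mse": mse, "rmse": rmse}


# Hypothetical actual and predicted values
y_true = [3.0, 5.0, 2.0, 7.0]
y_pred = [2.5, 5.0, 3.0, 4.0]
metrics = regression_metrics(y_true, y_pred)
print(metrics)
```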

Ethics Metrics

Ethics metrics help evaluate whether ethical safeguards are in place. They also help mitigate unethical practices that may have been introduced unintentionally during the development of AI systems.

Ethical issues in AI include bias, a lack of transparency and the unintentional perpetuation of societal prejudices, to name a few.

List of AI Ethics Metrics

The Foundation Model Transparency Index is a report card that grades how transparent the developers of powerful AI models are at different stages of development. It is designed to be updated over time. Its goal is to ground conversations about these models in clearer data. It encourages developers to share more information about how they build and use their models, sets out the important aspects of transparency, and helps track whether developers are becoming more transparent over time.

The index is detailed, comprising 100 indicators of transparency divided into three main categories:

1. Upstream indicators (32) cover what happens before the model is built. This includes the data used to train the model, the people involved in collecting that data, and the compute resources used. They also consider the methods used to train the model and how developers deal with issues like data privacy and copyright.

2. Model indicators (33) examine the characteristics of the model itself. This includes basic information such as its size and architecture, as well as whether clear evaluations of the model are available.

3. Downstream indicators (35) look at what happens after the model is built, such as how it is used, who uses it, and how the developers handle issues like data privacy and copyright in applications that use the model.
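The sketch below is a rough illustration of how such an index can be tallied. It assumes each indicator is scored simply as satisfied or not, then reports the share met in each of the three domains and an overall count out of 100. The input format and the example results are hypothetical and are not data from the published index.

```python
# Indicator counts per domain, as described above (32 + 33 + 35 = 100).
DOMAIN_SIZES = {"upstream": 32, "model": 33, "downstream": 35}


def transparency_summary(results):
    """Tally satisfied indicators per domain and overall.

    `results` maps each domain name to a list of booleans, one per indicator
    (a hypothetical input format chosen for illustration)."""
    summary = {}
    total = 0
    for domain, size in DOMAIN_SIZES.items():
        scores = results[domain]
        if len(scores) != size:
            raise ValueError(f"{domain}: expected {size} indicators, got {len(scores)}")
        met = sum(scores)
        summary[domain] = met / size  # share of this domain's indicators satisfied
        total += met
    summary["overall"] = total  # indicators satisfied out of 100
    return summary


# Hypothetical results for one developer, not taken from the published index.
example = {
    "upstream": [True] * 10 + [False] * 22,
    "model": [True] * 20 + [False] * 13,
    "downstream": [True] * 25 + [False] * 10,
}
summary = transparency_summary(example)
print(summary)
```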

IBM’s AI Fairness 360 (AIF360) offers a comprehensive set of fairness metrics for datasets and models, along with explanations for these metrics and algorithms to mitigate bias. It also provides an interactive web experience to help non-technical users grasp fairness concepts. For data scientists, it offers extensive documentation, usage guidance and industry-specific tutorials.

AIF360’s architecture is designed to align with common data science practices, making it user-friendly and extensible for researchers and developers. It also includes built-in tests to help maintain code quality.
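The sketch below makes the idea of a fairness metric concrete. It computes two group-fairness measures of the kind AIF360 reports, statistical parity difference and disparate impact. It does so from scratch rather than through AIF360’s own API. Both measures compare the rate of favourable predictions (here, label 1) between an unprivileged group and a privileged group. The group labels and predictions are hypothetical.

```python
def selection_rate(preds, groups, group):
    """Share of favourable (label 1) predictions within one group."""
    member_preds = [p for p, g in zip(preds, groups) if g == group]
    if not member_preds:
        raise ValueError(f"no examples for group {group!r}")
    return sum(member_preds) / len(member_preds)


def group_fairness(preds, groups, privileged, unprivileged):
    rate_priv = selection_rate(preds, groups, privileged)
    rate_unpriv = selection_rate(preds, groups, unprivileged)
    return {
        # 0 means both groups receive favourable outcomes at the same rate
        "statistical_parity_difference": rate_unpriv - rate_priv,
        # 1 means parity; undefined if the privileged group never receives label 1
        "disparate_impact": rate_unpriv / rate_priv if rate_priv else float("nan"),
    }


# Hypothetical model outputs for two groups, "a" (privileged) and "b" (unprivileged).
preds = [1, 1, 0, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
report = group_fairness(preds, groups, privileged="a", unprivileged="b")
print(report)
```

In this example group “b” receives favourable predictions at a third of the rate of group “a”. A disparate impact below 0.8 is commonly flagged under the “four-fifths rule”.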

The Adversarial Robustness Toolbox (ART) can be used to assess and defend machine learning models against adversarial threats, including evasion, poisoning, extraction and inference attacks. Evasion attacks manipulate inputs to cause incorrect predictions, and poisoning attacks corrupt the training data. Extraction attacks aim to steal the trained model’s parameters or replicate its capabilities. Inference attacks aim to reveal information about the data used to train the model, so defending against them helps maintain the privacy of that data.

ART is compatible with many popular machine learning frameworks, such as TensorFlow, Keras, PyTorch, MXNet, scikit-learn, XGBoost, LightGBM, CatBoost, GPy, and others. It can handle various data types, including but not limited to images, tables, audio and video. It also supports numerous machine learning tasks, such as classification, object detection, speech recognition, generation and certification.
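An evasion attack can be illustrated with the Fast Gradient Sign Method (FGSM), one of the attacks ART implements. The sketch below is a hand-written version against a tiny logistic-regression model and does not use ART’s API. The weights, input and perturbation size `eps` are hypothetical. It nudges each input feature by `eps` in the direction that increases the model’s loss, which is enough to flip this example’s prediction.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(w, b, x):
    """Probability of the positive class under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(v):
    return (v > 0) - (v < 0)


def fgsm(w, b, x, y, eps):
    """Fast Gradient Sign Method against logistic regression.

    For binary cross-entropy loss, the gradient with respect to the input is
    (p - y) * w, so each feature moves by eps in the sign of that gradient."""
    p = predict_proba(w, b, x)
    grad = [(p - y) * wi for wi in w]
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


w, b = [2.0, -1.0], 0.0   # hypothetical trained weights and bias
x, y = [0.6, 0.4], 1      # a positive example the model classifies correctly
x_adv = fgsm(w, b, x, y, eps=0.5)
print(predict_proba(w, b, x), predict_proba(w, b, x_adv))
```

The original input is scored at about 0.69 (positive), and the perturbed input drops below 0.5 (negative). Defences such as adversarial training aim to make models resist exactly this kind of small, targeted change.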

Another framework measures autonomy along two dimensions. It is instrumental in evaluating the degree of autonomy in AI systems and in addressing ethical, legal and safety considerations related to autonomous technology.

The first dimension of autonomy focuses on self-organisation, where autonomy emerges when a system forms a unified whole that cannot be broken down into separate parts. Integrated Information Theory quantifies a system’s irreducibility by comparing the statistical distribution of causal dependencies within the system to partitions that separate it into independent parts, measuring its ability to affect its components and processes.
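The idea of irreducibility can be illustrated with a deliberately simplified proxy. The sketch below assumes the system is described by a static joint probability distribution over a few binary units. For every way of splitting the units into two parts, it measures how much information the split discards, which is the mutual information between the parts. The smallest value over all splits indicates how irreducible the whole is. This is not IIT’s actual Φ, which is defined over a system’s causal structure and is considerably more involved. The functions and example systems are hypothetical.

```python
import math
from itertools import combinations, product


def marginal(joint, idx):
    """Marginal distribution over the variables at positions `idx`."""
    out = {}
    for state, p in joint.items():
        key = tuple(state[i] for i in idx)
        out[key] = out.get(key, 0.0) + p
    return out


def cut_information(joint, part_a, part_b):
    """Mutual information (bits) between two parts: the KL divergence between
    the joint distribution and the product of the parts' marginals."""
    pa, pb = marginal(joint, part_a), marginal(joint, part_b)
    info = 0.0
    for state, p in joint.items():
        if p > 0:
            a = tuple(state[i] for i in part_a)
            b = tuple(state[i] for i in part_b)
            info += p * math.log2(p / (pa[a] * pb[b]))
    return info


def min_cut_information(joint, n):
    """Toy irreducibility proxy: information lost by the least damaging bipartition."""
    units = range(n)
    best = math.inf
    for k in range(1, n // 2 + 1):
        for part_a in combinations(units, k):
            part_b = tuple(i for i in units if i not in part_a)
            best = min(best, cut_information(joint, part_a, part_b))
    return best


# Three binary units that always copy each other: no cut separates them cleanly.
coupled = {(0, 0, 0): 0.5, (1, 1, 1): 0.5}
# Three independent fair coins: the system splits apart with no loss.
independent = {s: 1 / 8 for s in product([0, 1], repeat=3)}
print(min_cut_information(coupled, 3), min_cut_information(independent, 3))
```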

The second dimension of autonomy deals with a system’s ability to exercise self-control and interact with its environment while maintaining some independence. To achieve this, a system should demonstrate control over its environment, even while connected to it. The Non-Trivial Information Closure (NTIC) measure tracks this control. It quantifies the gap between the information a system holds for predicting its own future actions and the extent to which those actions depend on its environment. Strong control is reflected by a high NTIC value.

These measures can be applied in several ways, for example:

1. To evaluate or standardise the autonomy of current AI systems, and to examine concerns linked to self-governing systems (rights, responsibility, risks, etc.) scientifically, as these systems interact more and more closely with human surroundings. This is particularly important for businesses considering developing autonomous systems.

2. To guide decisions about whether to extend or limit the autonomy levels of AI systems under development. This can benefit non-AI professionals by enhancing overall efficiency and productivity. It also offers a means to regulate AI autonomy in response to potential concerns or issues.


AI Metrics Evaluation Process

The evaluation process of an AI model involves several steps: creating a validation strategy, choosing the right evaluation metrics, carefully tracking experiment results and comparing them.

Validation Strategy: This is how the data is split to estimate the model’s performance on future, unseen data. It could be as simple as a train-test split or as complex as a stratified k-fold strategy.
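Below is a minimal sketch of stratified k-fold splitting in plain Python. It assumes class labels are available for every example. Each class’s indices are shuffled and dealt round-robin across k folds, so every test fold keeps roughly the original class proportions. The function name and seed are illustrative, and scikit-learn’s `StratifiedKFold` is the usual library choice.

```python
import random
from collections import defaultdict


def stratified_k_fold(labels, k, seed=0):
    """Yield (train, test) index lists whose test folds keep the class mix."""
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)
    rng = random.Random(seed)  # fixed seed for reproducible splits
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class round-robin so every fold receives a similar share of it.
        for j, i in enumerate(indices):
            folds[j % k].append(i)
    for f in range(k):
        test = sorted(folds[f])
        train = sorted(i for g in range(k) if g != f for i in folds[g])
        yield train, test


labels = [0] * 8 + [1] * 4  # hypothetical imbalanced labels
splits = list(stratified_k_fold(labels, k=4))
for train, test in splits:
    print(len(train), len(test), sum(labels[i] for i in test))
```

Each of the four test folds holds three examples, exactly one of them positive. This mirrors the one-in-three positive rate of the full dataset.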

Choosing the Right Evaluation Metric: Understand the business case behind the model and use the machine learning metrics that correlate with it. Typically, no single metric is ideal for a problem, so calculate several and base decisions on them together.
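A small example shows why relying on one metric can mislead. In the hypothetical fraud-detection data below, a model that never flags fraud scores 95% accuracy yet catches none of the fraudulent cases. A recall figure exposes this immediately. The helper and data are illustrative only.

```python
def metric_report(y_true, y_pred):
    """Accuracy, precision and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall}


# Hypothetical fraud-detection data: 95 legitimate transactions, 5 fraudulent ones.
y_true = [0] * 95 + [1] * 5
never_flags = [0] * 100  # a trivial "model" that never predicts fraud
report = metric_report(y_true, never_flags)
print(report)
```

If the business cost lies in missed fraud, recall tracks that cost far more closely than accuracy does.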

Tracking Experiment Results: Whether you use a spreadsheet or a dedicated experiment tracker, make sure to log all important metrics, learning curves, dataset versions and configurations. Tools like Decipher can assist with AI-powered code analysis and documentation, offering visibility into model behaviours and code quality, which is crucial for comprehensive evaluation and compliance.
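A spreadsheet can feel too manual when no dedicated tracker is in place yet. In that case, a minimal alternative is to append one JSON record per run to a file. The sketch below is a hypothetical helper that uses only the standard library. The field names are assumptions and should be adapted to the metrics, learning curves and configurations a project needs to log.

```python
import json
import tempfile
import time
from pathlib import Path


def log_run(path, config, metrics, dataset_version):
    """Append one experiment record to a JSON Lines file."""
    record = {
        "timestamp": time.time(),
        "dataset_version": dataset_version,
        "config": config,
        "metrics": metrics,  # can also hold learning curves as lists
    }
    with Path(path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


def load_runs(path):
    """Read every logged record back for comparison."""
    with Path(path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# Hypothetical runs logged to a temporary file
with tempfile.TemporaryDirectory() as tmp:
    log_path = Path(tmp) / "runs.jsonl"
    log_run(log_path, {"model": "logreg", "C": 1.0}, {"f1": 0.71, "loss_curve": [0.9, 0.6, 0.5]}, "v1")
    log_run(log_path, {"model": "logreg", "C": 0.1}, {"f1": 0.68, "loss_curve": [0.9, 0.7, 0.6]}, "v1")
    runs = load_runs(log_path)

print(len(runs), [r["config"]["C"] for r in runs])
```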

Comparing Experiment Results: Irrespective of the metrics and validation approach used, the aim is to identify the best-performing model. However, there might not be a single “best” model, but rather several that meet the required standards.
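Several models may meet the required standards, so one pragmatic approach is to filter runs by minimum thresholds first and only then rank the survivors. The sketch below assumes each run is a dictionary of named metrics. The thresholds, run names and scores are hypothetical.

```python
def shortlist(runs, thresholds, rank_by):
    """Keep runs meeting every minimum threshold, best first by `rank_by`."""
    acceptable = [
        run for run in runs
        if all(run["metrics"].get(name, float("-inf")) >= minimum
               for name, minimum in thresholds.items())
    ]
    return sorted(acceptable, key=lambda run: run["metrics"][rank_by], reverse=True)


# Hypothetical experiment results
runs = [
    {"name": "A", "metrics": {"recall": 0.82, "precision": 0.60, "f1": 0.69}},
    {"name": "B", "metrics": {"recall": 0.75, "precision": 0.70, "f1": 0.72}},
    {"name": "C", "metrics": {"recall": 0.90, "precision": 0.40, "f1": 0.55}},
]
candidates = shortlist(runs, {"recall": 0.7, "precision": 0.5}, rank_by="f1")
print([run["name"] for run in candidates])
```

Run C has the highest recall but fails the precision threshold. That leaves two acceptable candidates, B and A, rather than a single winner.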

Remember, the choice of evaluation strategy depends on the type of AI model used (such as classification or regression) and on the project’s specific requirements, including its ethical requirements.

Conclusion

Evaluating AI-based systems is a multifaceted process that involves a variety of metrics. Classification and regression metrics such as accuracy, precision, recall, F1 score, AUC-ROC, MAE, MSE and RMSE are often used to assess the performance of these systems, depending on the specific requirements of the task at hand.

In addition to these technical metrics, ethical considerations are crucial in AI development and deployment. Tools such as the Foundation Model Transparency Index, IBM’s AIF360 and ART, along with the two-dimensional framework for measuring autonomy, provide methods for assessing transparency, bias, robustness and autonomy in AI models. These tools encourage developers to be more transparent about their methods and help mitigate unethical practices that might unintentionally arise during development.

For businesses, using AI-driven metrics and smart KPIs enhances strategic alignment and performance insights, enabling organisations to better anticipate and navigate opportunities and threats. In short, organisations that use AI performance metrics to create or improve KPIs stand to gain greater business benefits.

At Version 1, our AI Labs are at the forefront of using and testing cutting-edge AI technologies. Our current areas of expertise include Generative AI, Computer Vision, Machine and Deep Learning, Natural Language Processing, Conversational AI and Sustainable AI, to name a few.