4 min read

An Analysis of OpenAI’s GPT-4

Is GPT-4 OpenAI’s Most Advanced System Yet?


In our recent blog post, we provided a full analysis of OpenAI’s GPT-3.5 and how it can best be used for your business. Now GPT-4 is here, bringing new features that build on the previous model. Let’s dive in and see what has changed.

Below, you can watch the livestream in which Greg Brockman, President and Co-Founder of OpenAI, introduced the features of the new release.

What’s New in GPT-4

GPT-4 is the latest of the GPT models and outperforms previous large language models in most areas, including code generation and logical and mathematical reasoning. We haven’t yet tested the model extensively against the older versions, but based on OpenAI’s demonstration, GPT-4 can work through complex reasoning problems, such as providing tax advice from a set of tax rules, and generates better code than GPT-3.5. OpenAI says that in its evaluation, GPT-4’s responses were preferred over GPT-3.5’s on 70% of prompts. GPT-4 also outperforms GPT-3.5 in most academic areas, including exams such as the LSAT and the bar exam.

Another change is that GPT-4 is currently available only to ChatGPT Plus subscribers, who pay a monthly fee to access the most up-to-date features. Some companies had integrated it into their services at launch, including Microsoft with its Bing chatbot. OpenAI also claims that the latest model is 82% less likely to respond to requests for disallowed content and 40% more likely to produce factual responses than the previous iteration. This should help the company address criticism about false information provided by the model.

More Context for Better Results

One of the biggest improvements in the latest model is the larger context length. GPT-4 comes in two variants, with context lengths of 8,192 tokens and 32,768 tokens (equal to around 52 pages of text). The larger variant offers 8 times the 4,096-token maximum of GPT-3.5, which allows users to process more text and perform tasks such as long-document summarisation. Both variants were trained on data up to September 2021, the same as previous models. For a basic user, the difference between GPT-3.5 and GPT-4 might be negligible, but business processes that require complex reasoning should perform better with GPT-4, and the larger context length allows the model to scale with business needs.
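The context figures quoted above can be checked with a little arithmetic. The following is a minimal Python sketch, not an official tool: the dictionary keys are illustrative labels rather than confirmed API model names, and `reserved_for_output` is a hypothetical allowance for the model's reply that we have chosen for illustration.

```python
# Context window sizes (in tokens) as quoted in this article.
# The keys are illustrative labels, not official API model identifiers.
CONTEXT_WINDOWS = {
    "gpt-3.5": 4_096,
    "gpt-4-8k": 8_192,
    "gpt-4-32k": 32_768,
}


def context_ratio(model: str, baseline: str = "gpt-3.5") -> float:
    """Return how many times larger a model's context window is than the baseline's."""
    return CONTEXT_WINDOWS[model] / CONTEXT_WINDOWS[baseline]


def fits_in_context(n_tokens: int, model: str, reserved_for_output: int = 500) -> bool:
    """Check whether a prompt of n_tokens fits, leaving room for the reply.

    reserved_for_output is an assumed allowance, not a documented limit.
    """
    return n_tokens + reserved_for_output <= CONTEXT_WINDOWS[model]


def models_that_fit(n_tokens: int, reserved_for_output: int = 500) -> list[str]:
    """List every variant whose context window can hold the prompt plus the reply."""
    return [
        model
        for model in CONTEXT_WINDOWS
        if fits_in_context(n_tokens, model, reserved_for_output)
    ]


if __name__ == "__main__":
    print(context_ratio("gpt-4-32k"))  # 32,768 / 4,096
    print(models_that_fit(20_000))     # a long report of roughly 20,000 tokens
```

Under these assumptions, a document of around 20,000 tokens only fits the 32,768-token variant, which is the kind of long-document task the larger context length is meant to unlock.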

Input Image & Output Text

Another significant breakthrough is that GPT-4 can accept images as inputs. This means that if you have data in a visual form, like a photograph or even physical data, you could feed it directly to the model to generate insights, summaries, Q&A and so on. Currently, OpenAI is testing this feature in collaboration with Be My Eyes to help visually impaired people. Once released, it could be used to analyse and draw inferences from any image your business requires.

For example, an inspector at a car manufacturing plant could upload photos of the materials available in the plant and ask the model to infer which materials are required or missing for production. OpenAI demonstrated the code generation and image capabilities together by asking GPT-4 to write HTML code from instructions handwritten on a piece of paper and uploaded as an image.

In an impressive demonstration, OpenAI showed a handwritten note being turned into a functional website in seconds.

I just watched GPT-4 turn a hand-drawn sketch into a functional website.

This is insane. pic.twitter.com/P5nSjrk7Wn

Pricing and Availability

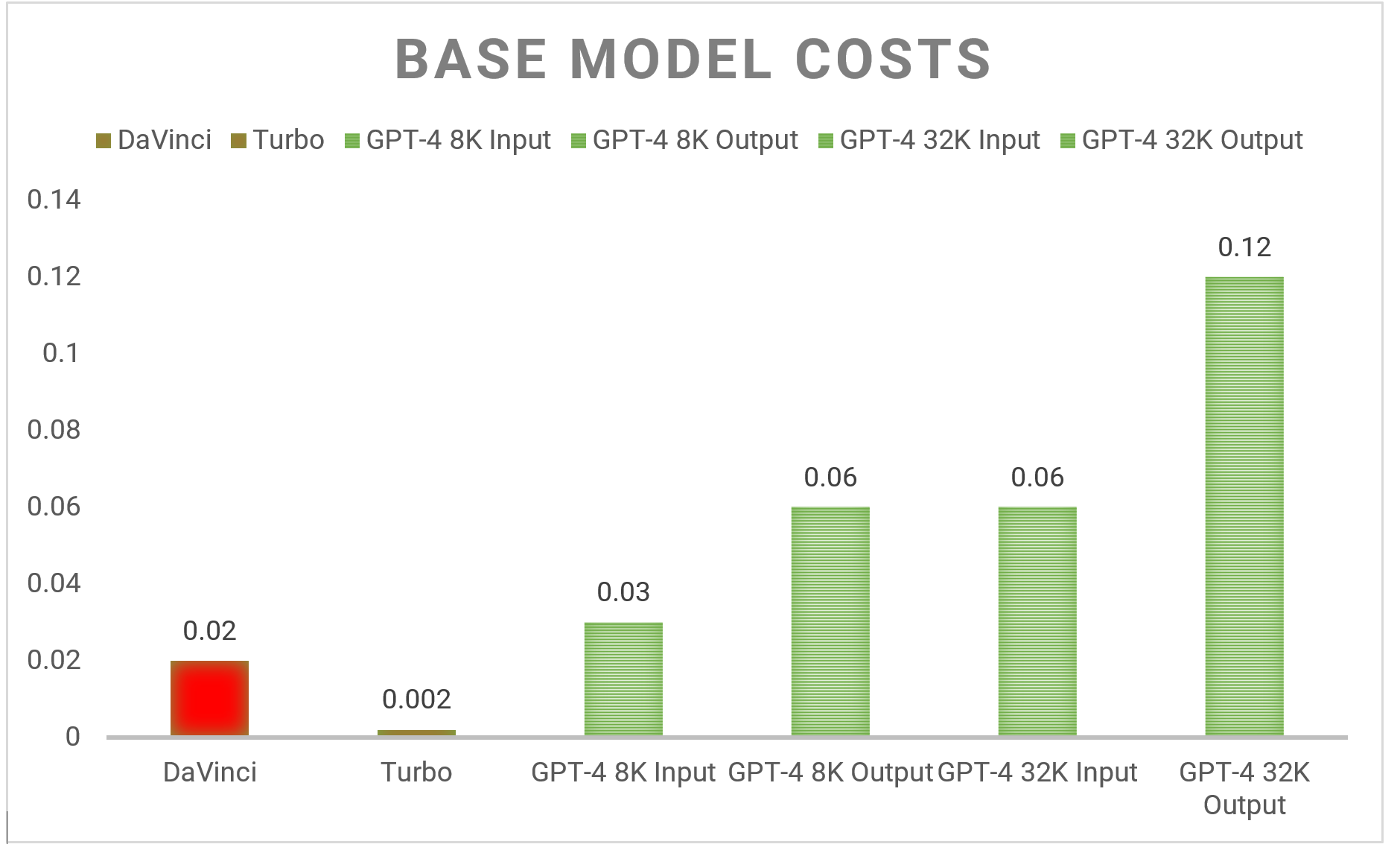

Because GPT-4 is more computationally intensive than previous models, it is also more expensive to use than GPT-3.5 Turbo (see OpenAI’s pricing page). For example, GPT-4 starts at $0.03 per 1K tokens at the prompt stage, compared to $0.002 per 1K tokens for the gpt-3.5-turbo model. GPT-4 is also billed at different rates for the prompt (input) and completion (output) stages. The accompanying chart shows how the model compares with previous models such as Davinci and Turbo.
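To get a feel for what these prices mean in practice, here is a rough Python sketch that estimates prompt-stage cost from the two per-1K-token prices quoted above. It is an illustration only: the function and dictionary names are our own, it covers prompt tokens alone, and completion tokens (billed separately) are deliberately left out because their rates are not quoted in this article.

```python
# Prompt-stage prices in USD per 1,000 tokens, as quoted in this article.
# Completion (output) tokens are billed separately and are not modelled here.
PROMPT_PRICE_PER_1K = {
    "gpt-4": 0.03,
    "gpt-3.5-turbo": 0.002,
}


def prompt_cost(n_tokens: int, model: str) -> float:
    """Estimate the prompt-stage cost in USD for a given number of tokens."""
    return n_tokens / 1000 * PROMPT_PRICE_PER_1K[model]


def price_ratio(model: str = "gpt-4", baseline: str = "gpt-3.5-turbo") -> float:
    """Return how many times more expensive one model's prompt price is than another's."""
    return PROMPT_PRICE_PER_1K[model] / PROMPT_PRICE_PER_1K[baseline]


if __name__ == "__main__":
    tokens = 8_000  # hypothetical prompt size chosen for illustration
    for model in PROMPT_PRICE_PER_1K:
        print(f"{model}: ${prompt_cost(tokens, model):.3f} for {tokens} prompt tokens")
    print(f"Prompt price ratio: {price_ratio():.0f}x")
```

On the quoted figures, prompt tokens cost roughly 15 times more with GPT-4 than with gpt-3.5-turbo, so it is worth estimating token volumes before moving a high-traffic process to the newer model.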

Are you ready for GPT-4?

In conclusion, GPT-4 has been shown to outperform previous large language models, including GPT-3.5, with a greater ability to follow a user’s intent. The model has two variants with a much larger context length than previous models, allowing for the processing of longer texts and complex reasoning. The ability of GPT-4 to accept images as inputs is a breakthrough and has great potential for various industries. Overall, GPT-4 marks a significant advancement in language modelling and opens new possibilities for various business processes.

Find out more about the work we’re doing in AI through our Innovation Labs.

Follow us on Medium for more on emerging technology.